60 energy performance certificates cross-tested with 10 different EPC programs

The detailed cross-testing of national energy performance certificate (EPC) schemes among the crossCert project partners is the main basis for issuing technical guidelines for the next generation of EPCs, guidelines for people-centred EPCs, and recommendations for harmonising the certificates in the EU. To achieve this goal, the consortium developed a cross-testing procedure. This methodology makes it possible to assess EPC schemes in a quantifiable way.

Cross-testing means calculating the energy performance of buildings of different types, user profiles, ages, structural conditions and technical equipment using the partners' national EPC calculation software, and comparing the experience and results obtained with each other’s EPC schemes.

However, cross-testing is not as straightforward as it may seem at first glance. Major practical obstacles must be overcome before cross-testing can support a sound comparison of the processes and their results and allow recommendations to be formulated. Notably:

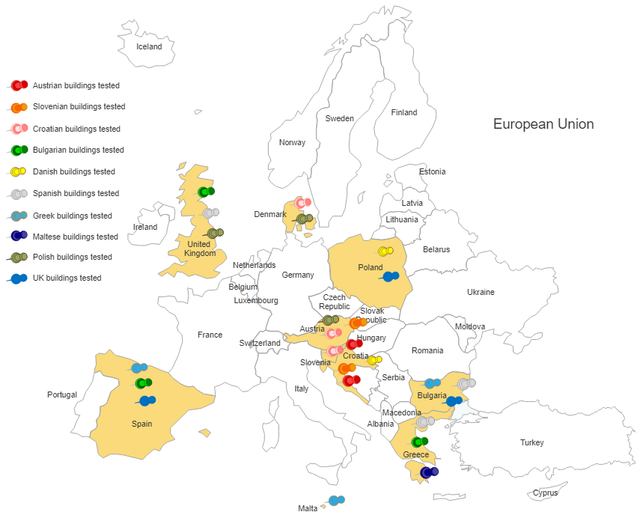

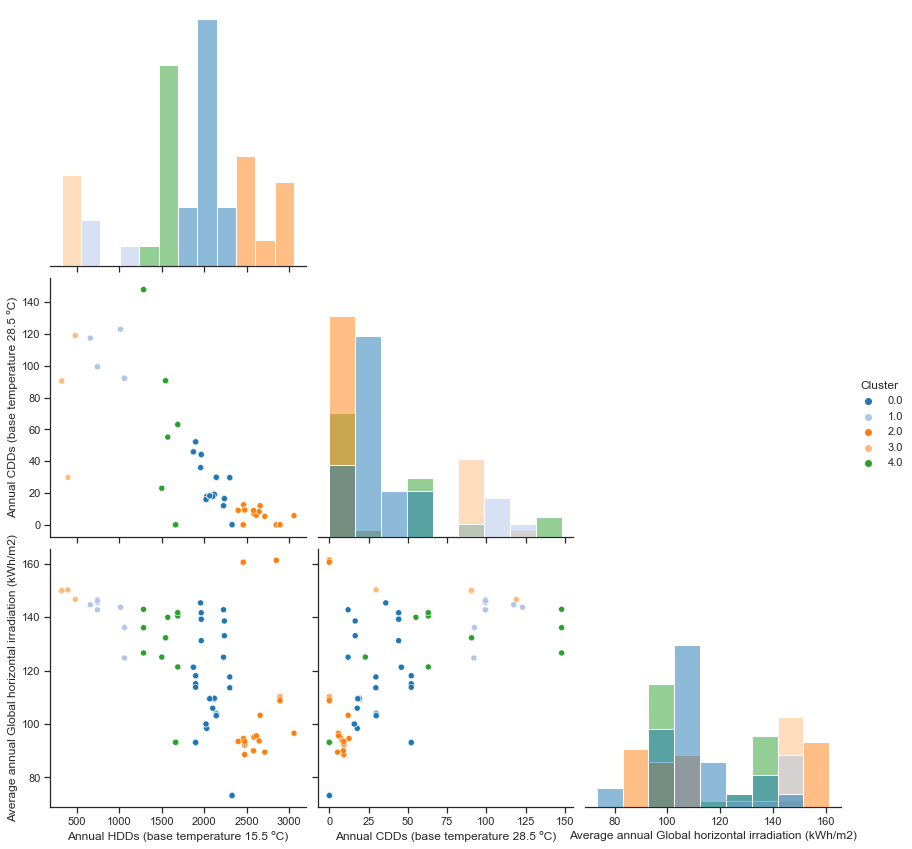

- Widely varying climate conditions across the countries. Most EPC software programs cannot import climate data for locations in other countries. This limits the use of one country's software to test buildings from another country. Therefore, partners with similar climate conditions need to be identified and paired for the cross-testing.

- Language of the EPC software. The calculation programs are available in different languages, which limits meaningful access for project partners from other countries.

- Differences in data requirements. Data requirements differ among countries, and the cross-testing activities brought this diversity to the forefront.

Initially, cross-testing in crossCert was planned to be carried out in in-person workshops. Due to the pandemic, however, travelling was impossible or very difficult in the early days of the project, and crossCert switched to testing by means of computer-mediated communication. This raised additional practical challenges for a dynamic and thorough cross-testing experience. The procedure devised to work around this difficulty is delivering the required results, but it requires more comprehensive building descriptions and is more time-consuming. Nevertheless, the team has so far cross-tested 60 EPCs among 10 different EPC schemes.

The project is already producing relevant findings.

A direct comparison of numerical results from the various national EPCs is of limited value because of differences in the level of data input, calculation methodologies, user profiles and indicators. Even the definitions of the reference areas used for calculations differ. The numerical results are therefore better interpreted in the context of an analysis of the calculation methods behind them.
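The effect of differing reference areas can be illustrated with a minimal sketch. All figures and area labels below are hypothetical and are not taken from crossCert results or from any national scheme; the point is only that the same annual energy use yields different specific indicators depending on the area used as the divisor.

```python
# Illustrative sketch only: the energy figure and both reference areas are
# hypothetical, chosen to show how the divisor changes the indicator.

def specific_demand(annual_energy_kwh: float, reference_area_m2: float) -> float:
    """Return the specific energy demand in kWh per square metre and year."""
    if reference_area_m2 <= 0:
        raise ValueError("reference area must be positive")
    return annual_energy_kwh / reference_area_m2


# One building, one calculated annual energy use (hypothetical).
ANNUAL_ENERGY_KWH = 12_000.0

# Two possible reference area definitions for that same building (hypothetical).
reference_areas_m2 = {
    "gross floor area": 150.0,
    "net heated floor area": 120.0,
}

indicators = {
    label: specific_demand(ANNUAL_ENERGY_KWH, area)
    for label, area in reference_areas_m2.items()
}

for label, value in indicators.items():
    print(f"{label}: {value:.1f} kWh/(m2 a)")
```

In this sketch the indicator differs by a quarter although the building and its energy use are identical, which is why numerical results need to be read together with the underlying definitions.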

Furthermore, technical terms are not used uniformly. One example is the heat energy demand: it often means the heat used for heating the building and producing hot water, but sometimes hot water production is not included in the definition. Another example is the end energy demand, which may or may not include lighting or cooling systems.
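A small sketch shows how much such a difference in definition can matter. The component values and the two definition labels are assumptions made for illustration; they do not represent any particular country's scheme.

```python
# Illustrative sketch only: component values and definition names are
# hypothetical and do not correspond to any specific national EPC scheme.

# Annual heat components of one building in kWh (hypothetical).
components_kwh = {
    "space_heating": 9_000.0,
    "hot_water": 2_000.0,
}

# Two ways the term "heat energy demand" might be defined.
definitions = {
    "including hot water": ("space_heating", "hot_water"),
    "excluding hot water": ("space_heating",),
}


def heat_energy_demand(components: dict[str, float], included: tuple[str, ...]) -> float:
    """Sum only the components that a given definition includes."""
    return sum(components[name] for name in included)


demand_by_definition = {
    label: heat_energy_demand(components_kwh, included)
    for label, included in definitions.items()
}

for label, value in demand_by_definition.items():
    print(f"heat energy demand ({label}): {value:.0f} kWh/a")
```

Two certificates can therefore report different values under the same term for the same building, so a comparison first has to establish which components each scheme includes.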

In some countries, the list of renovation recommendations produced by the EPC procedure is general, and it is left to the user to evaluate and prioritise them further. In other countries, the EPC offers very detailed measures with associated costs and payback times, and the possibility to write a customised recommendation report with calculations.

With regard to people-centred aspects such as user-friendliness for the EPC issuer, the extent of data input differs greatly among countries. In some countries, the data input is unduly demanding, very detailed and hard to complete. In others, it is not detailed enough. The balance between the level of detail required and the accuracy gained often remains to be optimised. Another issue is the lack of rigorous, unambiguous explanations of the input values; providing them would improve both user-friendliness for the issuer and the quality of the EPC.

As for the user-friendliness of the EPC report for the building owner, there is considerable room for improvement across the countries. The choice of indicators, their meaning and their graphical presentation are among the aspects to be improved.

The qualification requirements for EPC issuers also vary greatly among countries, ranging from few or no requirements to comprehensive qualification profiles and training, including continual quality checks.

Access to EPC results within each country also varies widely. Some countries offer detailed public access to EPC data. In Slovenia, for example, there is a publicly accessible map where users can see the buildings and their associated EPC details. In other countries, EPC databases are not available to the public.

Robustness is another area that requires attention, particularly to make the schemes less error-prone. During the EPC cross-testing, the consortium paid special attention to the robustness of the EPC calculation software, in particular to areas where misunderstandings or ambiguities in data entry could cause errors. We also analysed the possibilities and limits of calculations that closely model the real building and its use. One example is whether the EPC software allows the user profiles to be changed or more than one piece of technical equipment to be entered.

The experience of certifying with the calculation programs and schemes of other countries is resulting in in-depth knowledge of EPC schemes in Europe. Analysing and consolidating all identified sources of error and opportunities to increase user-friendliness will result in concrete recommendations to improve the quality and value of EPCs.

As our cross-testing effort continues, we will soon focus on the next-generation EPC schemes developed in several EU projects and on compiling recommendations for high-quality, user-friendly next-generation EPCs.

Figure: Clustering of buildings and their respective EPC schemes for cross-testing according to their climatic zones.